Like it or not, the use of AI (Artificial Intelligence) has become a part of our daily lives. While you might not use AI directly (or you don’t know that you do), it is now a common part of society, especially in the online world. Many people, sites, and companies use it to create content. It is part of the “smart” gadgets that we use at home. Map software (like Google Maps), search engines, ride share apps, and even the spam filter on your email all use AI. You’re even more likely to encounter AI on social media and even standard media these days, where it is used to write articles and text and to create ads and images.

We know that there’s no getting around it these days. You’ve probably heard stories about how AI provides incorrect information, steals content, or might help the robots overthrow humanity. While it seems to be the wild, wild west, there are a few (voluntary) safeguards in place now to prevent the overthrow of humanity (I hope). The real damage right now comes from the use of AI to mislead people outright. There’s also some danger from plain lazy use of AI, where whoever is using it to create content just copies and pastes it verbatim without checking what it actually says.

Gardening misinformation on the internet is nothing new. Gardening misinformation before the internet is nothing new either. But the risk that AI poses is the amplification and multiplication of that misinformation. It is now easier than ever for someone to create online content at the click of a button. And the way that AI works is that it scours the internet for existing information to learn how to respond. This new(ish) generation of AI is generative, meaning that it can actually put together information to form something new. Previously, if you did an internet search you would just get a list of websites to read for information. Now AI can use those sites as source material and write the information in a new way – however you prompt it to. Search engines like Microsoft’s Bing (the default search engine in Edge, the much-loathed replacement for the archaic Internet “Exploder”) now have AI built in as a feature. AI is only as smart as what it can learn on the internet, and we all know that while there is good information on the web, it is also full of a lot of manure.

Identifying Artificial Gardening Information

First, how can you tell if it is AI generated? At first glance you might not be able to tell. It is just like any writing. There are tools you can use to try to figure it out, but you’ll often find that writing from AI doesn’t have personality. It is often very dry. You can tell it to add humor or any other style to its writing, but it often misses the mark. When I asked ChatGPT (one of the common AI platforms) to add humor to something, it sounded like I was trying to be cutesy, like an employee at the famous park in the swamps of Orlando run by a mouse, and not like my usual wit, sarcasm, and cynicism. AI often fails to see context, and its answers are often very black and white, which doesn’t work in dynamic systems like gardening and the environment. AI therefore sticks a lot with generalities and doesn’t often provide a lot of specific information unless you ask for it.

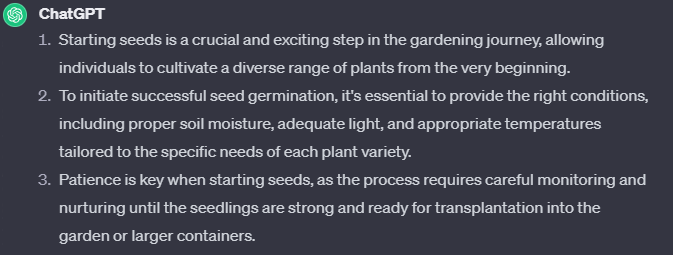

As an example, I prompted ChatGPT to write “three sentences about seed starting”. Here’s what it spit out:

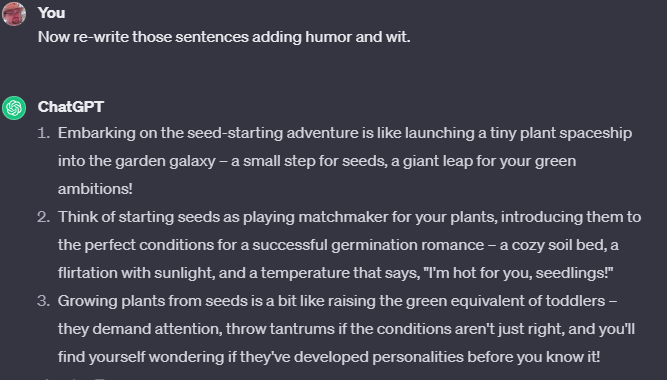

Nothing alarming there, but it is pretty dry and isn’t very informative. It isn’t what I would write. Now, I prompted it to rewrite those sentences, but to add “humor and wit”. This is what it vomited out:

Vomit, indeed. Though still nothing too alarming. Definitely too cute and not enough snark.

Where AI-generated text falls short is that it incorporates some of that incorrect manure from the web into its answers. It doesn’t know that information on the web is incorrect. A few times it told me to put rocks in the bottom of pots for drainage (something we’ve fiercely disproven time and time again).

It told me to practice companion planting (another fallacy we’ve taken on) but it never gave me a lot of details about how to do it.

I did ask it directly about biodynamic gardening, which is the pinnacle of garden misinformation. It gave an amazingly nuanced and diplomatic response, which is much closer to what I’d actually say and much nicer than what GP founder Dr. Linda Chalker-Scott would say. (Don’t tell her I said that).

So, nothing too earth shattering in text, but where I think the real risk lies is in AI generated images and videos. It is easier than ever to create images of things that aren’t possible or incorrect and pass them off as real. People often do this to drive traffic – by making wild claims that people must check out or by “rage baiting” people who just have to respond to tell people how wrong something is (it still drives engagement and earns money).

Fake images are nothing new in the gardening world. I can’t tell you how many ads I’ve seen for magical rainbow-colored rose seeds, trees that grow 10 kinds of fruits, and more all before the advent of AI. But now it is easier than ever to create those images at the click of a button.

As an example, I turned to DALL-E, which is a common AI image generator. I tried to think of things that wouldn’t be possible. My first prompt was “monarch butterfly on a snow-covered flower”. That is something that isn’t possible, but that someone might create to make a social media post about something amazing or miraculous that people have to see to believe.

The results look realistic(ish) enough, though improbable. But you’d have to know that to not believe it.

The second test, not so much: “realistic looking tree that has 15 different types of fruits and veggies growing on it”. I had to add the “realistic” because the first results were cartoon-y. It didn’t help much. So, I guess my magical 15 fruit and veggie tree won’t be coming to an online scam shop any time soon.

So, I moved on and created “a grape vine covered with scary looking bugs”.

At first glance, the result can look terrifying. But if you inspect it closely, you’ll see that those bugs have all kinds of legs coming from all over their bodies. Scary, yes, but realistic – no. But could someone do something like this to scare people about an invading insect? Absolutely!

Cutting through the Artificial CRAP

GP Founder Dr. Linda C-S has written about using the CRAP test to identify if a source of information is trustworthy. She used it to talk about Jerry Baker, the self-appointed “America’s Master Gardener” who peddled misinformation and garden snake-oil for decades through books and tv shows to earn big bucks. The same principles can be applied now to digital content created by AI to help figure out if the information is reliable. Here are the steps:

C = credibility. What are the credentials of the person or organization presenting the information? Are they actual experts? Or is it a random account that doesn’t have ties to a credible source? Does the source have academic training, or even practical knowledge?

R = relevant. Is the information relevant for home gardeners? Or does it try to use information from outside home gardening, like production agriculture, to answer the questions? For AI, especially images, I could also say that R = realistic. Is it something that could actually be true, or is it a monarch butterfly covered in snow?

A = accuracy. This could lend itself to the realistic assertion, but I see this more as the accuracy of the source of information. Does it cite sources, like journal articles, extension publications, USDA reports, etc.? And does the information agree with trusted information from other sources?

P = purpose. Why is someone presenting this information? In the Jerry Baker example, he was raking in money with books, TV shows, and product promotions. But what benefit does someone get from posting incorrect info on the web? Also, money. Whether or not you give them a dime, most social media sites and websites generate income by the number of clicks or viewers they have. How do you think people get rich and famous from TikTok? People aren’t paying to watch them, but they generate income from engagement and interaction. So, creating content that is fanciful enough to get people to check it out, or even wrong enough that people interact with it to rail against it, creates income.

Is all AI bad?

Not necessarily. I mean, the technology is applied in so many ways to solve so many problems. Sure, there is a risk and people do misuse it. But AI can be a powerful and useful tool when used appropriately, when information is checked, and when it isn’t copied and pasted directly. For example, over most of 2023 I wrote a series of GP articles about plant diseases. No, I didn’t have AI write the articles. That would have been wrong. But I did ask my friend ChatGPT to create lists of common diseases for each disease type I planned to write about. Instead of me having to dig through social media to see what people were asking about, the platform searched to see what the most common diseases that people talked or asked about were, or which ones were most likely to show up on websites. I took that list, added to it, subtracted from it, and then wrote each article myself. The more unethical (and lazy) users of AI just copy what it says verbatim without even reading or editing for accuracy. Or they even have automated systems that just crank out AI-generated content with no oversight.

In the end, AI isn’t going away. So as savvy gardeners we just have to know what to look for to “spot the bot”. And always be ready with a shovel to scoop away the CRAP.

Thank you for this article. I plan to copy and paste it (LOL) and hope that others benefit from the time you took to write it.

I had to look up Jerry Baker. When I read your description of a self appointed garden guru who peddles snake oil, another name came to mind. It’s a problem when such people acquire false credibility through association with a generally respected information outlet.

Thank you John, very interesting – our technology is fast leaving me behind, so I continue to just dig in the dirt.. By the way, this site’s background picture is a very poor representation of blueberries…

I just asked ChatGPT if it could generate answers using only information from peer-reviewed sources. It basically said “No”, primarily because it has not had access to or been trained on any material that requires a subscription. I then asked if it could write a report using only sources with an edu extension. Again, the answer was “No”. It appears that using the currently available versions of AI is fraught with issues.

I am a member of the Annapolis Horticulture Society (Annapolis, MD) and I am sure our members would enjoy and get good guidance from this article of yours. Could you please tell me if that would be possible. Maybe just a link to it that members could go to. Thanks so much.

Letitia Iorio (letitiaiorio@gmail.com)

Anyone can access our blog. You can copy the URL of the post itself, but I’d suggest interested people sign up for notifications. We’ve been posting since 2009 and there are 1300+ great posts for people to look through.

Thanks

Thanks for including Jerry Baker among the fake stuff! It’s amazing how many times he’s cited by a visitor to the Master Gardener booth where I volunteer. My response is usually, “That’s a good book to recycle or use in the fireplace.” I get shocked looks, but after I explain, they agree with me!

Excellent essay! and I agree that Internet gardening = too much superfluous junk!

Even prior to any mention of AI, I’ve encountered so many useless garden blogs in my writing research for work, especially those for houseplants (which are not my specialty). These posts add absolutely nothing to available information, why do they exist??? I guess it is so necessary to generate “new” material to keep eyeballs on their blog that they are writing about individual plants and just copy-and-pasting their OWN posts since so many plants have identical needs for light and watering. Descriptions of color, texture, growth rate, and true dimensions are rarely listed – which would be helpful for my curious customers. If they let AI do the work, it’ll only generate more filler junk.